The recent focus on George Mason University’s investigation into plagiarism allegations concerning the Wegman “hockey stick” report and related scholarship has led to some interesting reactions in the blogosphere. Apparently, this involves trifling attribution problems for one or two paragraphs, even though the allegations now touch on no fewer than 35 pages of the Wegman report, as well as the federally funded Said et al 2008. Not to mention that subsequent editing has also led to numerous errors and even distortions.

But we are also told that none of this “matters”, because the allegations and incompetence do not directly touch on the analysis nor the findings of the Wegman report. So, given David Ritson’s timely intervention and his renewed complaints about Edward Wegman’s lack of transparency, perhaps it is time to re-examine Wegman report section 4, entitled “Reconstructions and Exploration Principal Component Methodologies”. For Ritson’s critique of the central Wegman analysis itself remains as pertinent today as four years ago, when he expressed his concerns directly to the authors less than three weeks after the release of the Wegman report.

Ritson pointed out a major error in Wegman et al’s exposition of the supposed tendency of “short-centred” principal component analysis to exclusively “pick out” hockey sticks from random pseudo-proxies. Wegman et al claimed that Steve McIntyre and Ross McKitrick had used a simple auto-regressive model to generate the random pseudo-proxies, which is the same procedure used by paleoclimatologists to benchmark reconstructions. But, in fact, McIntyre and McKitrick clearly used a very different – and highly questionable – noise model, based on a “persistent” auto-correlation function derived from the original set of proxies. As a result of this gross misunderstanding, to put it charitably, the Wegman report failed utterly to analyze the actual scientific and statistical issues. And to this day, no one – not Wegman, nor any of his defenders – has addressed or even mentioned this obvious and fatal flaw at the heart of the Wegman report.

Wegman et al begin the section with an incomplete (and somewhat misleading) account of the role of principal component analysis (PCA) in Mann et al’s reconstruction methodology.

But for now, I’ll skip ahead to the discussion of McIntyre’s results, based on the analysis in the 2005 GRL paper, “Hockey sticks, principal components, and spurious significance”.

While at first the McIntyre code was specific to the file structure of his computer, with his assistance we were able to run the code on our own machines and reproduce and extend some of his results.

The four figures which follow apparently demonstrate Wegman et al’s ability to “reproduce” McIntyre’s “results”. (After that, Wegman goes on to “extend” the results by incorporating the infamous “cartoon” from the first IPCC report, a passage with its own problems that I’ll leave for another time).

Describing the first figure, Wegman et al say:

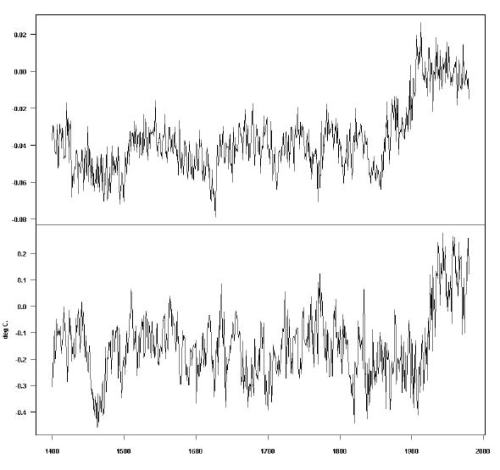

In Figure 4.1, the top panel displays PC1 simulated using the MBH98 methodology from stationary trendless red noise. The bottom panel displays the MBH98 Northern Hemisphere temperature index reconstruction.

Indeed, this appears to be an exact replica of McIntyre and McKitrick’s Fig. 1, down to use of the same “sample” PC1! Presumably, the “reproduction” is exactly the same, save for the file paths, not an independent replication. Even the same seed for random generation must have been used, so that the same “sample” PC1 (out of 10,000 pseudo-proxy series) could be identified and re-used. (If not, the exact replication of this one “sample” would indeed be hard to explain).

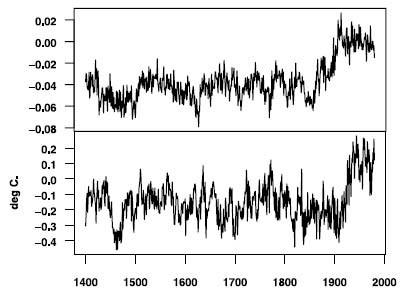

Here is M&M 2005 Fig. 1:

Figure 1. Simulated and MBH98 Hockey Stick Shaped Series. Top: Sample PC1 from Monte Carlo simulation using the procedure described in text applying MBH98 data transformation to persistent trendless red noise; Bottom: MBH98 Northern Hemisphere temperature index re-construction.

And here is Wegman et al fig. 4.1 (scaled here to match the aspect ratio of M&M fig. 1).

The purpose of this low-level mechanical “recomputation” is unclear, but it certainly does not demonstrate insight or understanding of McIntyre’s methodology. Note also the reference to “stationary trendless red noise”, which Wegman et al previously define in section 2.2 as AR1 (auto-regressive with lag of order 1).

Now I’ll skip ahead to Figure 4.4, the first one that is not a direct reproduction of a corresponding figure in M&M 2005.

Figure 4.4: One of the most compelling illustrations that McIntyre and McKitrick have produced is created by feeding red noise [AR(1) with parameter = 0.2] into the MBH algorithm. The AR(1) process is a stationary process meaning that it should not exhibit any long-term trend. The MBH98 algorithm found ‘hockey stick’ trend in each of the independent replications.

Presumably, we have here 12 more PC1 samples from the same 10,000 pseudo-proxy series recomputed using McIntyre’s code. But, for the first time, Wegman et al specifically refer to these as generated via a conventional AR1 red noise model. The discussion is interesting:

Discussion: Because the red noise time series have a correlation of 0.2, some of these time series will turn upwards [or downwards] during the ‘calibration’ period and the MBH98 methodology will selectively emphasize these upturning [or downturning] time series.

Here (also for the first time), Wegman et al note that “downwards” hockey sticks are also generated; indeed, fully half of the PC1s turn down rather than up. Yet, somehow, only upward turning PC1s are shown (no fewer than 12)!

The relevant point here, though, is that the claim that AR1 “red noise” proxies generate pronounced spurious PC1 “hockey sticks” [whether turning up or down] almost 100% of the time is completely wrong, especially given the low AR parameter of 0.2. How could Wegman et al make such a huge mistake?

Part of the problem may have been McIntyre and McKitrick’s confusing nomenclature; they refer to “persistent trendless red noise”, which might lead a careless reader to assume that they meant conventional AR1 “red noise”. McIntyre also refers to the MBH98 procedure (somewhat redundantly) as “AR1 red noise”, and perhaps Wegman et al confused the two models.

MBH98 attempted to benchmark the significance level for the RE statistic using Monte Carlo simulations based on AR1 red noise with a lag coefficient of 0.2, yielding a 99% significance level of 0.0.

McIntyre’s unconventional use of the term “red noise” certainly contributed to the confusion; this may have even been done to imply a greater correspondence between the two pseudo-proxy types than actually exists. On the other hand, M&M do refer several times to “persistent” red noise, including right in the abstract. And the described procedure does not resemble an AR1 model, although it is confusingly said to generate (unqualified) “trendless red noise”:

We downloaded and collated the NOAMER tree ring site chronologies used by MBH98 from M. Mann’s FTP site and selected the 70 sites used in the AD1400 step. We calculated autocorrelation functions for all 70 series for the 1400–1980 period. For each simulation, we applied the algorithm hosking.sim … which applied a method due to Hosking [1984] to simulate trendless red noise based on the complete auto-correlation function.

The usual term for this more complex noise model, which generates series exhibiting very long-term dependencies, is fractional ARIMA (autoregressive fractionally integrated moving average), also referred to as ARFIMA, first described in JR Hosking’s 1981 Biometrika paper, Fractional Differencing. (ARIMA, the autoregressive integrated moving average model, is itself an extension of the familiar ARMA models). It would have been helpful to unsophisticated readers if M&M had actually used the recognized term at some point.

In his follow up email of July 30, 2006, David Ritson elaborated on the actual procedure used by McIntyre, together with its full implications:

To facilitate a reply I attach the Auto-Correlation Function used by the M&M to generate their persistent red noise simulations for their figures shown by you in your Section 4 (this was kindly provided me by M&M on Nov 6 2004 ). The black values are the ones actually used by M&M. They derive directly from the seventy North American tree proxies, assuming the proxy values to be TREND-LESS noise.

Surely you realized that the proxies combine the signal components on which is superimposed the noise? I find it hard to believe that you would take data with obvious trends, would then directly evaluate ACFs without removing the trends, and then finally assume you had obtained results for the proxy specific noise! You will notice that the M&M inputs purport to show strong persistence out to lag-times of 350 years or beyond. Your report makes no mention of this quite improper M&M procedure used to obtain their ACFs. Neither do you provide any specification data for your own results that you contend confirm the M&M results. Relative to your Figure 4.4 you state “One of the most compelling illustrations that M&M have produced is created by feeding red noise (AR(1) with parameter = .2 into the MBH algorithm”.

In fact they used and needed the extraordinarily high persistances contained in the attatched figure to obtain their `compelling’ results. Obviously the information requested below is essential for replication and evaluation of your committee’s results. I trust you will provide it in timely fashion.

Ritson appears to suggest that Wegman et al claim to have independently generated the results in figure 4.4 using AR1 (.2) pseudo-proxies. But the truth is likely more mundane, if no less disquieting.

Wegman et al appear to have simply retrieved additional sample PC1s from their mechanical recomputation of M&M 2005, without a glimmer of understanding concerning the underlying statistical model actually used to generate the pseudo-proxies. In so doing, they missed the fundamental issue concerning McIntyre’s claim of demonstrated extreme bias via so called “persistent red noise” proxies. After all, there’s a huge difference between pseudo-proxies that have auto-correlation lags up to 350 years and an AR1 derived pseudo-proxy set!

The controversy concerning McIntyre’s pseudo-proxies continues to this day, as I discussed in my piece on McShane and Wyner. Yet, in all this time, Wegman et al have never provided the promised supporting material, or even acknowledged David Ritson’s questions about their flawed analysis.

It’s hard to imagine a more egregious and fatal analytical flaw than Wegman et al’s utter misunderstanding of the very procedure said to demonstrate the extreme bias of Mann et al’s PCA methodology. Wegman’s mischaracterisation of McIntyre’s pseudo-proxies as conventional “red noise”, along with the accompanying failure to actually analyze McIntyre’s methodology, surely ranks as one of the epic gaffes in statistical analysis. Indeed, no one can reasonably continue to claim that Wegman’s central analysis and findings hold up.

But I’m sure we’ll be told very soon that this, too, doesn’t matter.

[Note: Slight additions for clarity are shown in square brackets. ]

As I showed in Shooting the Messenger with Blanks, the hockey stick-shape temperature plot that shows modern climate considerably warmer than past climate has been verified by many scientists using different methodologies (PCA, CPS, EIV, isotopic analysis, & direct T measurements).

Consider the odds that various international scientists using quite different data and quite different data analysis techniques can all be wrong in the same way. What are the odds that a hockey stick is always the shape of the wrong answer?

Scott,

Excellent summary! A must see for anyone interested in this, and a must see for anyone who still is under the misconception that the Hockey Stick is “broken”. I know that I will be referring people to your summary. [Maybe John Cook would be interested in featuring it on his site?]

Am quite confused about this; it seems like his procedure was statistically dubious. Did Wegman pull a fast one? How does it all square with the NRC finding in Figure 9-2 (and Appendix B) which seems to be actual red noise:

http://books.nap.edu/openbook.php?record_id=11676&page=140

Red noise is an imprecise term, unfortunately.

The NRC merely replicated McIntyre’s work (correctly). If you look at the code you will see that they too used fractional ARIMA, just like M&M, not AR1 as claimed by Wegman et al. Correction [h/t Layman Lurker]: NRC did use an AR1 noise model, assuming the default order is (1,0,0). But the AR coefficient is very high (0.9), which would give a much higher autocorrelation than the AR1(.2) claimed by Wegman to have been used by McIntyre. This is presumably why the NRC stated that their noise model was “similar” to McIntyre’s.

```r
phi <- 0.9;
# …
b <- arima.sim(model = list(ar = phi), n);
```

As far as I can tell Wegman just reran M&M’s code (not an independent replication as the NRC did). But they thought the pseudo-proxies were AR1(.2) based, not high-persistence fractional ARIMA. The point is that AR1(.2) pseudo-proxies would have demonstrated considerably less bias in the PC1s (although undoubtedly some would be seen). And of course this flaw in the central analysis strikes at the very credibility of the Wegman report.

> Did Wegman pull a fast one?

Probably not originally. But when Ritson started asking questions, they must have realized what had happened, and started stonewalling, as a proper answer would have made their incompetence to do a proper replication obvious for all to see.

This makes a lot more sense. Thanks to DC, Gavin’s Pussycat, and Layman Lurker. “Very red” noise like this seems like it could be really pernicious.

I think the “all showing up” is a pretty minor issue. There are a lot of things wrong and, like you said, we have to take them one at a time. I remember grimacing a little at the time, noticing that none were shown pointing down. I think just a more prominent mention would have been best (like within the figure caption). Actually in a way, displaying them all up is probably best for a display purpose, since the algorithm flips them regardless and so for the human brain to process apparent similarity, having some of them up and some down would be a distraction.

Actually if somehow, they are getting all of the HS’s pointing in one direction (not 50-50) that would be a sign of something wrong…

The “upwards” and “downwards” refer to the pseudoproxy series, not to the PC1s. PCs are only unique up to orientation, so it doesn’t make any sense to distinguish upwards from downwards PC1s.

What I never found clear in this argument is whether “the MBH algorithm” refers to:

(a) the algorithm in MBH98 for calculating a temperature reconstruction, or

(b) the proxy PCA step with calibration period centring.

But the PC1s will tend to also assume (“selectively emphasize”) an upward or downward shape, depending on the underlying pseudo-proxy data, unless I am missing something. I don’t see why all the PC1s must be only one orientation. In fact, M&M fig. 2 (Wegman 4.2) shows that half of the generated PC1s are upward turning and the other half are downward turning (i.e. their mean over 1902-1980 is more than 1 standard deviation *below* the 1400-1980 mean).
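The “more than 1 standard deviation” criterion can be written down as a small function. This is only a sketch, assuming the index is the 1902-1980 mean minus the 1400-1980 mean, divided by the 1400-1980 standard deviation, as the sentence above implies. The function name, the choice of population standard deviation and the toy step series are assumptions made here for illustration, not M&M’s code.

```python
from statistics import mean, pstdev


def hockey_stick_index(values, first_year=1400, cal_start=1902):
    """(calibration-period mean - full-period mean) / full-period standard deviation.

    `values` holds one number per year, starting at `first_year`.
    Hypothetical helper; the population standard deviation is an assumption.
    """
    calibration = values[cal_start - first_year:]
    return (mean(calibration) - mean(values)) / pstdev(values)


# Toy series for 1400-1980: flat until 1901, then a step up (or down).
up = [0.0] * 502 + [1.0] * 79
down = [-v for v in up]

print(hockey_stick_index(up) > 1)     # upward "hockey stick"
print(hockey_stick_index(down) < -1)  # same shape, opposite orientation
```

Negating a series only flips the sign of the index, which is why a histogram of such indices from simulations can show two symmetric lobes.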

On “the MBH algorithm”, Wegman et al are not entirely clear either. But implicitly it concerns the proxy sub-network PCA, specifically the dimensionality reduction of the 70 North American tree ring series available back to 1400.

The first principal component is the linear combination with the greatest possible variance.

But var(–PC1) = var(PC1), so both PC1 and –PC1 have maximum variance and one is as good as the other. Which one you get depends on your software — the same set of data could give you opposite orientations in two different programs, and both would be equally correct. Normal practice is to choose the orientation that makes interpretation easiest.
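To spell that step out under the usual PCA setup (the symbols $w$, $x$ and $\Sigma$ are notation introduced here for illustration): if PC1 is the projection $w^\top x$ of centred data $x$ with covariance matrix $\Sigma$, where $w$ has unit length, then

$$\operatorname{Var}(-w^\top x) = (-w)^\top \Sigma\,(-w) = w^\top \Sigma\, w = \operatorname{Var}(w^\top x), \qquad \lVert -w \rVert = \lVert w \rVert = 1,$$

so $-w$ satisfies the same constraint and attains the same maximum variance as $w$.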

One thing you might be missing is that if the set of pseudoproxies contains upwards slopers and downwards slopers, you’ll end up with positive coefficients on the upwards ones and negative coefficients on the downwards ones (or vice versa). You can flip the orientation of any number of the pseudoproxies without affecting the PC1 (you may get some yammering about things being “upside down” from McI though!).

Your point is valid since the ultimate regression in a CFR-type reconstruction does not assume a sign of correlation a priori.

But M&M’s running text (and the histogram in Fig 2) refers to equal numbers of upward and downward hockey sticks. Rather than explain that this is irrelevant to how the PC is used in the final reconstruction, M&M (and by extension Wegman) simply elected to show only upward bending hockey sticks, creating a more compelling visual effect.

DC, the sign of PC1 is simply not relevant. For example, when you run a slightly modified NAS code to return a single simulation and PC1 (as I have done here), you will get just as many negative PC’s as positive. If the sign is not relevant then you can arbitrarily orient the sign whichever way makes sense.

From NAS:

LL,

I think we’re having a “violent agreement” here. I agree the sign is irrelevant, as Pete said. However, that should at least have been explained properly by M&M and Wegman, not glossed over.

Even one of Wegman’s expert ad hoc “reviewers”, Noel Cressie, agreed that both orientations should have been shown:

cressie-email-2006-07-18-with-attachment.pdf

Of course, Cressie’s one and only set of written comments was sent a few days *after* the report was submitted, although that didn’t stop Wegman from naming him as one of the reviewers. (Yes, the supposed “peer review” is another big issue – one thing at a time).

Yes, “violent agreement” about the sign relevance. The presentation of an arbitrary sign is not improper or misleading. An explanation (à la NAS for figure 9-2) would have been ideal but certainly not of great importance.

When I learnt PCA we used a set of sparrow measurements as an example. We got two significant PCs, interpreted as “size” and “shape”. If you did simulation studies, you’d get 50/50 “size” and “–size” as the PC1. Obviously you’d want to flip the orientation of the negative PCs. Personally I’d briefly note I’d done so, but if you assume good faith from the author that’s not strictly necessary (because it’s obvious what they’ve done).

Of course McI gets no assumption of good faith from me until he posts a corrected version of his mixed-effects model post that TCO and I proved was erroneous.

I don’t have a big problem with McI making a math mistake. I have a HUGE problem with him erasing the evidence. That should be up there forever and the thread unlocked. He can take his time, saving the analysis. But leave the screwup front and center. He enjoyed the PR and debate diversion while it was up there (like from Lucia). Well he can take the bitter with the sweet.

DC,

I suspect that this revelation, if correct (I am honestly not qualified to make a judgment call, hence my caveat), will have 3M (McIntyre, McKitrick and Morano) seeing red 😉 That, or they will continue attacking scientists to try and distract people from yet another inconvenient truth.

One has to wonder how much longer 3M can keep up this charade.

> AR1 (auto-regressive with lag-1)

Actually order-1 autoregressive (meaning indeed that, in addition to the variance, you only have to specify one autocorrelation, typically the one for lag 1)

[DC: Corrected. Thanks. ]

Didn’t Von Storch & Zorita’s comment to M&M already show they were wrong (or overreaching)? Didn’t the Wegman report have that comment in their reference list? Didn’t they NOT refer to that paper at all in the actual text?

Correct on all three counts. The same applies to the Huybers comment. And the dismissal of Wahl and Ammann completes the trifecta of peer-reviewed commentary on M&M *not* discussed in the Wegman report.

I tried probing on a bunch of these issues several years back (both with MnM themselves and with places where the Wegman repetition seemed to not really show clear understanding or clear description of the simulations). You can go look back in the Neverending Audit for them. I was met with a lot of resistance, rather than frank answers. I hate that. If someone comes to check your math/logic, you should be glad (even if you hold your breath that there might be an issue…you should still be a man and let people pressure test your NPV-DCF valuation and look for little glitches in the Excel. 😉

I kinda lost heart to just keep pinning people down on this c**p. At the end of the day, you are talking about a pretty c****y penny-stock operator whose companies have just burned capital and never created returns for shareholders or economic value in terms of useful mining development. And then the fakesy sea lawyer Latin.

[DC: Edit – let’s cool it with the personal insults. Thanks! ]

It’s even funny how people somewhat sympathetic to probing of Mann and to “skepticism” (Huybers, Burger, Zorita, me (not that I have the cred of the first 3)) have found McI evasive and refusing to answer questions.

The whole thing has gone on for years and is just kind of trashy. We’ve had years for putup or shutup and we don’t have papers and instead have years and years of blog posts and even all the c**p from Watts (and McI and Watts are real thick with each other).

The whole thing is just lame.

“If someone comes to check your math/logic, you should be glad (even if you hold your breath that there might be an issue…you should still be a man and let people pressure test your NPV-DCF valuation and look for little glitches in the Excel”.

Someone should have brought this up during the years Mann was stonewalling requests to see his data and code.

Yammering? I would call it more like bleating like a baby lamb after its mother. He never shuts up about it.

Long time CA followers may know that Steve McIntyre discussed Ritson’s questions back in August 2006.

In that post, McIntyre stated:

As we’ve seen above, the NAS confirmed the effect for AR1(.9), not AR1 (.2). And Wegman simply mislabelled the M&M results as AR1(.2), when they were based on ARFIMA. This is crystal clear in Wegman’s caption for 4.4, as noted above. [Corrected – h/t Layman Lurker].

The discrepancy was noted by Ritson in his first email to Wegman report authors. How could McIntyre miss that? After all, he quoted from that same email in the same post. McIntyre discusses Ritson’s “first claim” which is question 4 from Ritson’s email.

Here’s one of Ritson’s questions to Wegman that McIntyre skipped over:

Ritson’s second email to Wegman et al is even more explicit, actually quoting the Wegman Fig 4.4 caption. Again the email was quoted by McIntyre, but this part was omitted:

So … Wegman claimed that McIntyre had demonstrated the same strong bias effect of short-centred PCA with AR1 as with ARFIMA pseudo-proxies, while McIntyre claimed that it was Wegman who had demonstrated it!

You can’t make this stuff up.

It appears to me that the NAS code specifies a model with a single autoregressive term or ARIMA(1,0,0) which is the same as AR(1). Am I missing something?

[DC: Yes, you’re right, assuming the order defaults to arima(1,0,0) with the passing of the single AR parameter (which makes sense). It is worth noting, though, that an extremely high AR term was used (0.9), so this is presumably why the NRC stated that their noise model was “similar” to McIntyre’s. I’ve made the appropriate corrections above. It is reasonable to presume that AR1(.2) (the Wegman claim) would give a different result from the much more aggressive AR1(.9).

Thanks for the correction.]

The “effect applies” but the extent is not the same. McI has a long habit of exaggerating the impact and resisting clear disaggregation to show what changes drive what results in the MBH algorithm.

It’s similar to what he did with the “short-centering” (imo “wrong”) versus normal centering. What he did there was ALSO change a standard deviation dividing (a pretty normal practice, at least debatable on its own) and actually this drives the extent of impact, much more than the MBH 1900s short centering. This was the subject of the Huybers comment.

There is just a long pattern of the guy exaggerating impacts and resisting clear issue analysis. This bugs me as it is a pretty sophomoric debate trick, and also it just shows a lack of science/math curiosity (look in contrast to how Burger runs a full factorial).

It’s always about exaggerating the impact of short-centering.

1. Confusing “the hockey stick” (overall recon) versus PC1

2. signal retained in noise model red noise (“full ACF”)

3. standard deviation dividing mixed in as a change

We’ve been going round on this stuff for years (literally) now. It’s like the MMH paper where they spent years and still made stupid errors and occluded clear issue analysis with a bunch of linear algebra gobbledygook and played stealth burden of proof shifting games. It’s just so damned NOT value add.

P.s. Redskins got 4 picks. Go D-Hall for DPOTW.

In the “take a Ritalin, Dave” thread, Ross McKitrick’s comment caught my attention, in particular:

> I remember the series of increasingly accusatory inquiries we got from Ritson. [W]e got tired of the increasingly accusatory tone of the correspondence and said that if he had a substantive point to make he should write it down and send it to GRL as a comment.

Source: http://climateaudit.org/2006/08/31/do-climate-scientists-need-ritalin/#comment-62269

McKitrick’s reaction seems quite normal and justified, all of a sudden.

TCO,

Maybe there’s something I should disagree with, but I haven’t found it yet. Yes, I think short-centered PCA was wrong. And, yes, its bias effect was greatly exaggerated by McIntyre, in all the ways you mention.

Willard,

Well I have no idea what the correspondence between M&M and Ritson was all about.

But I do know that the questions asked by Ritson to Wegman were very relevant and deserved to be answered. I would think you would find it interesting that McIntyre steered away from discussing the questions that might show that Wegman had really messed up. But then again we both know McIntyre is the king of selective quoting, don’t we?

I actually (I think; it’s been long, and I am too lazy to research it) raised some of the same questions Ritson had, independently (to Steve, and he blew them off). The thing about the two recon graphs looking identical despite a rationale that they should be (or a statement that they looked different, when I couldn’t see any difference). Ritson’s questions are just common sense questions trying to assess the work. If there was/is an explanation, Wegman should have given it (and given it was in discussion at Steve’s and was right in Steve’s area of interest, he is delinquent for not addressing it…I see him avoiding this as a form of dishonesty…like walking past a problem and pretending not to see it).

It’s been frikking 4 years. And we are still jerking around with this stuff?

The shark has not just been jumped, it’s been tap-danced on top of til it died of starvation and overteasing.

Sounds a lot like Judith Curry’s recent complaints about the “mean girls” over people at RC and elsewhere beating her up over substantial errors in fact, which she continuously mischaracterizes as her getting beat up because she exposes “uncomfortable truths” (climate models are tuned to the temperature record, when anyone who’s looked into models like GISS Model E knows this is false, etc).

If you can’t argue substance, accuse them of being mean …

Dhogaza, you are mean.

Neven,

Loved your sea ice page this past melt season! Dhogaza might be terse and abrupt/curt, but s/he does make some awfully good and pertinent points. Actually, dhogaza represents the very antithesis to the mealy-mouthed diatribes of certain people who like to Curry favour with the ‘skeptics’ 😉

That all said, things do seem to be unraveling rather quickly for 3M, don’t they? One has to wonder how they managed to deceive the world for so long…

Neven, I don’t think I’m mean, I think I’m documenting a meme.

Creationists make the same play against evolutionary biologists, and for the same reason.

Curry is welcoming her new reputation, there’s no reason at all for me to feel bad about making what she does crystal clear, in her own (paraphrased, but accurate) words.

If she doesn’t want to equate solid rebuttal to her incorrect points as being “mean [high school] girl” attacks, well, she doesn’t have to make that claim, does she?

And after making it, how is repeating it “mean”?

I can’t argue the substance, so I just accuse you of being mean. 😉

I forgot the smiley. In other words, I agree with you completely.

I think Neven was just making a joke.

Yeah, I get it now … Neven, you’re mean, too! 🙂

DC, I’m impressed. You’re shining bright statistical light on what had been a dim topic, the calculations behind the accusations of Wegman’s shoddy report.

Let’s get back on topic, and look at the term “red noise” (77,200 Google hits).

Now consider the phrases:

“persistent red noise” (3280 Google hits)

“trendless red noise” (447 Google hits)

“persistent trendless red noise” (4 Google hits)

The question is how many of these latter hits are for references unrelated to McIntyre and McKitrick’s GRL paper and related analysis? (Although, I suppose the answer is obvious for that last combo phrase!)

Let me get this straight: ARFIMA(1), because it introduces long term persistence, would not be “trendless”. Is this a correct understanding of the argument?

If you assume no trend where there is one, and then estimate autocorrelations for your ARFIMA model, those autocorrelations contain information about the trend.

So if you start with proxies that contain a hockey-stick signal, estimate ARFIMA coefficients based on a no-signal assumption, and then run simulations based on those estimates, you’re going to recover that hockey-stick signal.
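A toy illustration of that mechanism, using only the Python standard library. It is a sketch under loud assumptions: the “signal” is a hypothetical straight-line trend, the noise is white, and the helper names are made up here. It does not reproduce the tree-ring data or the ARFIMA fitting; it only shows that an autocorrelation function estimated without removing a trend looks highly “persistent”, while the same series with the trend removed does not.

```python
import random


def sample_acf(x, lag):
    """Sample autocorrelation of x at the given lag (mean removed, no detrending)."""
    n = len(x)
    m = sum(x) / n
    denom = sum((v - m) ** 2 for v in x)
    numer = sum((x[t] - m) * (x[t - lag] - m) for t in range(lag, n))
    return numer / denom


def detrend_linear(x):
    """Subtract the ordinary least squares straight line from x."""
    n = len(x)
    t_mean = (n - 1) / 2
    x_mean = sum(x) / n
    slope = (sum((t - t_mean) * (v - x_mean) for t, v in enumerate(x))
             / sum((t - t_mean) ** 2 for t in range(n)))
    return [v - (x_mean + slope * (t - t_mean)) for t, v in enumerate(x)]


rng = random.Random(42)
n = 581  # one value per year, e.g. 1400-1980
noise = [rng.gauss(0.0, 1.0) for _ in range(n)]
series = [0.01 * t + e for t, e in enumerate(noise)]  # hypothetical trend + white noise

for lag in (1, 50, 100):
    raw = sample_acf(series, lag)
    cleaned = sample_acf(detrend_linear(series), lag)
    print(f"lag {lag:3d}: treated as pure noise {raw:5.2f}, trend removed {cleaned:5.2f}")
```

Any noise model fitted to the first column inherits the trend’s apparent long memory, even though the actual noise here has none.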

Yep, that was the basic criticism of the MM critique on this basis. Glad I got it right. Not as dumb as I thought I was.

If you want to go back down memory lane, you can see me trying to get Steve to explain his noise model. He’s pretty resistant to scrutiny.

Here’s the way I understand it (and I’ll let Pete correct me as necessary; goodness knows, he’s done the same for McIntyre often enough). Normal paleoclimatological practice is to assume that proxies can be modelled as a combination of signal and noise. The noise is modelled as an autoregressive process of order one with a moderate parameter (Mann et al 2008 used AR1(.24) and AR1(.4) for example). Then the skill of reconstruction is evaluated by comparing model performance on the real proxies with performance on pseudo-proxies generated as, say, AR1(.4).

The M&M method appears to be to assume the proxies are *all* noise and estimate the parameter that way, proxy by proxy, which will give a much higher AR1 factor in general.

Then, in GRL 2005, they go one better and assume that each proxy’s noise structure should be modeled with ARFIMA, which would include the auto-regressive parameter (AR), plus possibly MA (moving average, also order one) and FI (fractional integration). The order of this last model element can take non-integral values, say between 0 and 1 or between 1 and 2, which enables more precise specification (otherwise the model tends to blow up). The end result is a noise model with much more “persistence” or “long memory”.

Even if you use just AR1, the parameter will make a big difference. This makes intuitive sense when you realize that the factor specifies how much auto-correlation there should be (i.e. how much the value at time t depends on the previous value at t-1).

Ritson gives a simple formula for estimating the short-term persistence of AR1 red noise: (1 + rho) / (1 – rho). For 0.2, this gives 1.2/0.8 = 1.5 years. For 0.9, this gives 1.9/0.1 = 19 years: big difference. But ARFIMA (which is normally not called “red noise” in standard parlance, the point I was making in the beginning) can give persistence as high as 350 years in the pseudo-proxies generated by M&M (again, according to Ritson in his email to Wegman).
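For anyone who wants to play with the numbers, the formula quoted above is a one-liner. This is just a sketch in Python; the function name is made up for the illustration.

```python
def decorrelation_time(rho):
    """Persistence of AR(1) red noise in time steps: (1 + rho) / (1 - rho)."""
    if not -1.0 < rho < 1.0:
        raise ValueError("rho must lie strictly between -1 and 1")
    return (1.0 + rho) / (1.0 - rho)


for rho in (0.2, 0.4, 0.9):
    print(f"AR1({rho}): {decorrelation_time(rho):.1f} years")
```

Note how steeply the persistence grows as rho approaches 1, which is why the choice of parameter matters so much.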

I can’t tell for sure which noise model is most appropriate. What really bothered me was that McI tried to basically use a more extreme case, with less extreme wording (the whole semantics around calling it red noise). A REAL curious intellect would want to look at AHS as a function of degree of redness and would WANT to shine a light on the extent of redness used.

Just a memory from the Neverending Audit. There was a guy called “Chefen”, who looked at trying to model the overall recent temp record to prove that it was just a random walk. He got some play on CA and referrals. Note, Steve did not endorse his method (he’s cagey that way), but he didn’t thoughtfully criticize it either.

I went over to Chefen’s blog and started asking my questions. Chefen was pretty irked at the scrutiny. He gave me some blasts for not knowing some of the mathematics and terminology (and I admit I don’t, the funny thing is you can still ask relevant questions). It’s often the case, that when you ask a good question, the first response is pushback* rather than consideration of the concept.

Anyhow, I just kept asking my “dumb questions”. Guess what? The guy was specifying the mean of the series and an AR parameter, but ALSO, he was specifying the START POINT of the series. Guess what? If you specify both the average AND the start of a TRENDING series, you are specifying the TREND! So his whole claim that AR1 produced the recent temp increases was wrong. He finally realized it and admitted that I had pointed it out to him. He did say that he would keep looking into the problem and try to “save” his analyses, but he never did (nor did he come back and say it was unsalvageable, to really complete the loop and correct himself). What he did eventually was close the blog and disappear it (it was part of the Sir Humphrey collective). I have a problem with this, since he showed the in-progress work, got a lot of hoi polloi excited, had a PR impact, etc. He should have really withdrawn his work, and left it up to show his mistake. This is one of the reasons why I really don’t like blogging as a method of communication. It’s not archived and serves more to excite the home team than to really be a record of thought and discussion.

* Look at Lucia with her noise model for recent temp decline or Mike with having to be asked 3 times in a row about the Ritson differencing (Mike was in fact mistaken and I was happy to see some of his side keep after him till he realized it). But often the person eventually gets a niggling that they may have a weakness and goes back and finds it. This is what happened with Chefen. (By the way, I don’t agree with the instinctive pushback, and see it as an emotional and defensive response rather than a rational/fair/curious one, but no matter… at least it is better to be eventually amenable to reason than never reachable (Watts, Id, Goddard).) Or to be more precise, you can think of it as a spectrum. But the really good guys like Jolliffe and Zorita are much readier to at least consider they might have a problem, rather than be defensive.

There seem to be two distinct meanings for the term pseudoproxy.

It’s mostly used in simulation studies. In that case you want to evaluate your method against reasonable simulated data. You build your pseudoproxy out of both signal and noise, and both signal and noise contribute to the autocorrelation of the pseudoproxy. You need to use a smaller coefficient for your noise, so that the combined autocorrelation of the pseudoproxy is realistic.

The term is also sometimes used for hypothesis testing. In this case you set up a null hypothesis that the proxies contain no signal. Now your pseudoproxies are entirely noise, and so your AR1 coefficient will need to be larger in order to “explain” the empirical autocorrelation.
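A rough way to see why the two uses call for different noise coefficients is to add weak AR1 noise to a made-up signal and look at the lag-1 autocorrelation of the result. The Python sketch below does that. Everything in it is an assumption for illustration: the “signal” is a crude flat-then-rising ramp, the noise is AR1(.2), and the function names are invented here.

```python
import random


def ar1_noise(n, rho, rng):
    """Return n values of AR(1) noise with unit-variance Gaussian innovations."""
    # Start from the stationary distribution so there is no warm-up transient.
    x = rng.gauss(0.0, 1.0) / (1.0 - rho * rho) ** 0.5
    out = [x]
    for _ in range(n - 1):
        x = rho * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


def lag1_autocorr(series):
    """Sample lag-1 autocorrelation of a series."""
    n = len(series)
    mean = sum(series) / n
    num = sum((series[i] - mean) * (series[i + 1] - mean) for i in range(n - 1))
    den = sum((v - mean) ** 2 for v in series)
    return num / den


rng = random.Random(0)
noise = ar1_noise(600, 0.2, rng)
# Hypothetical signal: flat for 500 steps, then a linear rise of 3 units.
signal = [0.0] * 500 + [0.03 * (i + 1) for i in range(100)]
pseudoproxy = [s + e for s, e in zip(signal, noise)]

print("noise only     :", round(lag1_autocorr(noise), 2))
print("signal + noise :", round(lag1_autocorr(pseudoproxy), 2))
```

The combined series is noticeably more autocorrelated than the noise that went into it. Fitting a single AR1 coefficient to the combined series under a “no signal” null therefore gives a larger number than the noise coefficient used in a simulation study.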

MM05 showed that the MBH centring convention promotes hockey-stick shapes to the first PC. It will promote both hockey-stick shaped noise and hockey-stick shaped signal to the first PC. If there is a sufficiently strong hockey-stick shaped signal, we can detect it by showing that the “hockey-stick index” from the proxies is unusually large compared to those from AR1 noise.

If you use a “persistent trendless red noise” ARFIMA model, you get hundreds of parameters to overfit. This will completely destroy the statistical power of your test. That said, MM05 don’t even bother to do that test.

> you’re going to recover that hockey-stick signal

Pete, not necessarily… even in this case, just most of it.

An analogy would be with outlier detection in simple linear regression. Say you have n points on a straight line, one of which contains a gross error just over three sigma. If you do a three sigma test of the outlier against a linear fit through the remaining n-1 points, you may find a rejection, whereas including the point, you may not. This is assuming n is fairly small.
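Here is a toy version of that outlier test in Python, with seven hand-picked points. The data are invented for the illustration, and the test is deliberately crude: it divides the point’s deviation from the fitted line by the residual sigma of that fit, ignoring the extra uncertainty of the prediction.

```python
def fit_line(xs, ys):
    """Ordinary least squares; returns (intercept, slope, residual sigma)."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    rss = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    return intercept, slope, (rss / (n - 2)) ** 0.5


def sigma_ratio(xs, ys, i, exclude):
    """Deviation of point i from the fitted line, in units of the fit's sigma."""
    if exclude:
        fit_x, fit_y = xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:]
    else:
        fit_x, fit_y = xs, ys
    intercept, slope, sigma = fit_line(fit_x, fit_y)
    return abs(ys[i] - (intercept + slope * xs[i])) / sigma


# Invented data: scatter of sigma = 1 about y = 0, plus a 3.5 error at index 3.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [1.0, -1.0, 0.0, 3.5, 0.0, -1.0, 1.0]

with_point = sigma_ratio(xs, ys, 3, exclude=False)
without_point = sigma_ratio(xs, ys, 3, exclude=True)
print(f"point included in fit: {with_point:.2f} sigma")
print(f"point excluded       : {without_point:.2f} sigma")
```

With the suspect point left out of the fit it sits at 3.5 sigma and is rejected. With it included, it drags the fit towards itself and inflates the sigma estimate, so it comes in well under three sigma.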

I should have said “the hockey-stickiness of the signal” or something similar. With hundreds of acf parameters to overfit you’re going to keep a lot of information.

I’m afraid I’ve missed the point of your analogy, but I do love robust regression so please continue!

If you look at this comment from Steve, “Be careful what you think that you’re understanding. We ran AR1, but the illustration in MM05a is from ARFIMA which is not one coefficient. Wegman and NAS ran one coefficient. If you look at the post on the Ritson Coefficient, you’ll find information on AR1 methods.”, he says Wegman ran one coefficient. But it seems rather unlikely that Wegman DID, since the resultant graph was so similar to what McI got from “full ACF”.

You would think superSteve would notice this. Anyhow, I raised that issue (as did Ritson) and Steve just ignored it.

Yep. It’s like I said above, McIntyre kept saying Wegman showed hockey sticks from AR1, while Wegman said Steve showed it. I want to tell them, “Stop. You’re both wrong”.

I’m amazed how often McIntyre repeated this. But surely now he realizes how foolish the assertion was – maybe that’s why he’s changed the subject abruptly from Wegman all of a sudden.

TCO, you were asking the right questions. And you’re right – there’s no way those hockey sticks in Wegman fig 4.4 would have been produced as the PC1 from AR1(.2) pseudo-proxies. Soon, I’ll be showing exactly where they did come from … in a day or two.

We also have Bender backing up Steve on Wegman’s supposed use of AR1:

At 4:49pm I pointed out the use of AR(1) in Wegman Fig 4-4.

This part doesn’t read very clearly. It sounds like you’re saying that 100% is wrong because 50% turn downwards.

With the claimed AR1(.2), virtually none (0.2%) of the PC1s have a “hockey-stick index” greater than McI’s arbitrary cutoff at 1 (MBH’s ITRDB PC1 has an HSI of 1.6).

With realistic AR1(.4), only about 21% exceed 1, and still none exceed 1.6 (in 1000 simulations using the NAS code the largest I got was 1.3).

To get as strong a hockey-stick as the real data you need either AR1(.9) (NAS) or ARFIMA (Wegman, MM).
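For readers who have not met the “hockey-stick index” before, here is a minimal Python sketch of how such an index can be computed. It assumes the definition as I understand it from MM05: the mean over the closing “blade” period minus the mean over the whole series, divided by the standard deviation of the whole series. The use of the population standard deviation, the function name and the toy series are all assumptions of this example.

```python
import statistics


def hockey_stick_index(series, blade_len):
    """(blade mean - overall mean) / overall standard deviation.

    `blade_len` is the number of points at the end of the series
    treated as the blade; population sd is an assumption here.
    """
    blade_mean = statistics.fmean(series[-blade_len:])
    overall_mean = statistics.fmean(series)
    return (blade_mean - overall_mean) / statistics.pstdev(series)


# Toy series: 500 flat points followed by a 100-point step up.
up = [0.0] * 500 + [1.0] * 100
down = [-v for v in up]

print("upward blade  :", round(hockey_stick_index(up, 100), 3))
print("downward blade:", round(hockey_stick_index(down, 100), 3))
```

A blade bending down simply gives a negative index, which is why the cutoff discussed in this thread is best read as |HSI| > 1.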

What I meant was this (i.e. I should have moved away explicitly from the up-down issue, rather than implicitly):

The relevant point here, though, is that the claim that AR1 “red noise” proxies generate pronounced spurious PC1 “hockey sticks” almost 100% of the time (whether bending up or down) is completely wrong, especially given the low AR parameter of 0.2. How could Wegman et al make such a huge mistake?

My intuition was that you couldn’t come close to such large numbers of pronounced hockey sticks (|hsi| > 1) from moderate or low AR1 pseudoproxies. I still can’t understand why Wegman thought otherwise. Or McIntyre for that matter.

We still do not know who actually put together WR section 4… quite possibly, Said did most of the work.

> Since Chefen has brought this figure [MBH fig. 7] into play, there’s much to consider about it.

Source: http://climateaudit.org/2006/05/29/mbh98-figure-7-2/

I know that McIntyre has cited many times Wegman (and NAS) as supporting the short-centering bias effect producing hockeysticks from AR1 “red noise”.

But the latest I can find is September 2008:

Ross, the difference is just in the simulation algorithm. The hosking.sim algorithm uses the entire ACF and people have worried that this method may have incorporated a “signal” into the simulations. It’s not something that bothered Wegman or NAS, since the effect also holds for AR1, but Wahl and Ammann throw it up as a spitball. Fracdiff is a 3-parameter simulation and is a cleaner simulation that avoids the spitball.

If people criticize McI for using a noise model that EXAGGERATES the AHS, it is not a valid defense to say that the AHS still EXISTS with a different noise model. He is playing a (very high school) debate game there. I’m quite OK with knowing that AHS occurs anyway. That does not mean that McI was not wrong in his noise model, and that he exaggerated his opponent’s flaw. Oh…and resisted scrutiny.

Deepie:

Huybers did some work using the MM code. See his comment and also supplemental materials. Huybers did tests both on the proxies themselves and on noise series. Don’t recall if he used the ACF variation or the AR1 variation. You could dig into it I guess. Or could just run the different variations yourself, I guess, and see what the difference is. Maybe write another comment on MM (or maybe this was the rejected Ritson comment’s point).

I think if you accept that short centering is not kosher (and I believe you do), then just looking at the tests on the proxy series themselves is enough to show an exaggeration (looking at short centered versus normal centered). Of course, McI mixed in the standard deviation dividing issue (changing two variables at once, which Huybers raps him on the knuckles for). But even cutting that out (why, oh, why, does my side overreach?), you see larger HS in PC1 just from looking at standard versus Mannian normalizations.

P.S. I can’t believe we are still kerking around with this stuff years later…

“… it is not a valid defense to say that the AHS still EXISTS with a different noise model.”

Especially when it doesn’t for a particular noise model that McIntyre claimed. That’s the point.

It doesn’t exist at ALL, or was exaggerated in how big it was?

I’m using hsi of 1. ARFIMA produced close to 100% hockey sticks.

McIntyre keeps claiming Wegman demonstrated the “effect” with AR1, and Wegman specifically claimed a strong effect for AR1(.2).

According to pete,

So that’s one definition of lack of effect. [0% instead of 100%!]

More generally, I take pete’s point to show that the “real proxies” hockey stick PC1 is well above the significance level of the AR1 (.2) or even AR1(.4) pseudoproxy PC1s. You need an unrealistically high AR1 parameter to demonstrate that claimed effect (lack of significance) for the MBH PC1. After all, McIntyre’s claim is that it is “spurious”.

Of course, if you mean that the pseudoproxy or real proxy PC1 will be “more” of a hockeystick under short-centred PCA, then, sure, there is always some effect. But that’s a much weaker claim than McIntyre is making.

IIRC, the NAS code does not transform the data ala MBH98 or MM05. From MM05:

According to MM05 this has a very significant impact on the proportion of series in which a 1 sigma blade occurs:

I have only been into R for a few months though, so somebody better check my reading of the code.

The NRC code defines the baseline as the last 100 points of the 600 long pseudo-proxies.

Then, here a pseudoproxy is generated and recentered on the baseline.

IOW, there is an effect, just a very small one.
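The recentering on a 100-point baseline described above can be illustrated without any PCA at all. The Python sketch below is not the NRC or MM05 code (which is in R); it is a toy with invented series and function names. It shows that centring on the last 100 of 600 points leaves a trendless series alone but inflates the sum of squares of a series whose closing mean differs from its overall mean, which is the mechanism that lets such series dominate the first PC.

```python
def center(series, baseline=None):
    """Subtract the mean of the whole series, or of its last `baseline` points."""
    ref = series if baseline is None else series[-baseline:]
    mean = sum(ref) / len(ref)
    return [v - mean for v in series]


def sum_sq(series):
    """Sum of squares about zero."""
    return sum(v * v for v in series)


n, baseline = 600, 100
# Toy series: alternating +1/-1, with and without a unit step in the last 100 points.
flat = [(-1.0) ** i for i in range(n)]
stick = [v + (1.0 if i >= n - baseline else 0.0) for i, v in enumerate(flat)]

for name, s in (("flat", flat), ("stick", stick)):
    full = sum_sq(center(s))
    short = sum_sq(center(s, baseline))
    print(f"{name:5s}: full-centred SS = {full:7.1f}   short-centred SS = {short:7.1f}")
```

The short-centred sum of squares equals the full-centred one plus n times the squared difference between the overall mean and the baseline mean. For the flat series that difference is zero. For the step series the sum of squares rises from about 683 to 1100.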

TCO,

Rereading the comments on M&M 2005 (two published and two not), it seems quite clear that no one at that time understood exactly what M&M did. M&M are primarily responsible for the confusion, as they did not clearly describe the derivation of their empirical ACFs (auto-correlation functions) via ARFIMA fitting. (And that’s undeniable – even Wegman et al did not understand it, and they were supposed to be statistical experts).

So one can hardly blame Ritson for only figuring it out later, once McIntyre was pressed for the exact ACFs used. Unfortunately, this means that the comments (and replies) as they stand do not address this all-important issue.

As far as I know the only paper that does (or at least comes close) is Ammann and Wahl (2007).

Ammann_ClimChange2007.pdf

DC,

I would like to know if this discussion can be related to your current endeavours:

Readers should be able to find there soundbites for the actual discussion around tone and preferred narratives.

From a technical point of view, it’s not as related as you might think, although it involves the same players.

Recall that above “pete” mentioned the two uses of pseudo-proxies – one to validate reconstructions by comparing to a set of benchmark “reconstructions” based on pure noise pseudo-proxies, as discussed in this thread.

But McIntyre and Ritson are discussing the *other* use of pseudo-proxies – to test reconstruction *methodologies*, rather than to validate an actual reconstruction. In that case, a lower amount of red noise is added to a temperature signal obtained from a climate model simulation of past temperature (or other climate variable). To the extent that the methodology can “recover” (or not) the simulated temperature history, that is taken as evidence of its worth and characteristics.

The important point is that these pseudo-proxies are created by adding a *small* amount of red noise to a simulated climate “signal”. Since the simulated temperature series has some degree of autocorrelation already, presumably the resultant pseudo-proxy will reflect that. But McIntyre appears to be advocating that a much larger amount (i.e. the complete “red noise” found in actual proxies) should be added.

I’m not sure how Ritson derived his AR1 parameter for this added red noise, but I think McIntyre misunderstood his point. I’m not really that interested in pursuing it, though.

Here is the RC article that sparked all the fuss:

http://www.realclimate.org/index.php/archives/2006/05/how-red-are-my-proxies/

Ritson had a booboo in that RC article. Mike said he didn’t (like 2 or 3 times in a row). Even people on the RC side were calling it out and finally Mike acknowledged it. Albeit with a very opaque statement that did NOT say “my mistake”. Felt very good to see his own side challenge Mike though. Bad that Mike instinctively backs himself up, rather than listening when people bring something up. That’s why it had to be brought up several times….

Not so fast …

TCO, did you read the supplementary article that Ritson referenced, where he explains that he is trying to decompose the red noise from low-frequency signal in the real-world proxies?

nred.pdf

It still looks to me as if McIntyre misunderstood the point.

watcha bet Steve is reading this thread, planning his stormy CA post, full of sealawyer Latin and snark and putdowns? I hope he gets that mistake on the MMH fixed first. Or just puts back up the evidence and unlocks the thread….;)

i was talking about the differencing stuff that Mike at first said, no way…but then it turns out that it was differencing.

No, I didn’t read it. Cripes. This stuff is so yesterday…

So it’s not that Ritson had a boo-boo, it’s that Mike Mann appears not to have understood how Ritson got his result. Ultimately, I suppose the proof of Ritson’s method would be in the final result – would the resulting pseudo-proxy be realistic in terms of its combined noise structure?

Von Storch’s use of AR1(0.7) red noise to be *added* to simulated temperature signal is almost certainly incorrect, and Ritson was much closer to the mark. So where’s Ritson’s boo-boo, pray tell?

You get one more kick at this, and then let’s move on, OK?

I know for sure that Mike was incorrect regarding Ritson, that he DID difference (this despite people asking about it TWICE, even people on Mike’s “side”). And then when he did fess up, he did so in a bizarre oblique manner. Not “I was wrong”.

Whether Ritson was RIGHT is a different issue. My impression is not, but I’m not an expert. You’re right, to really dig into that you would have to look at it separately. Given people on even your side were concerned that the differencing was a logical error, my suspicion is that Ritson was wrong here. That doesn’t mean he is wrong at everything (something about Internet debates seems to make people think people are always wrong if they have one error). For instance, going after Wegman’s methods, trying to figure out the ACF subroutine, etc. were good activities. I bet (bet is different than assure) if we look at the specifics of the Ritson red proxies RC post, that the differencing was a mistake, at least what inferences were made using that.

I think that he (Mann) might, just might, have misunderstood something. He (Mann) did not use differencing. This is what I got out of reading the exchanges. Ritson did not make a boo-boo; he used a different method from Mann.

“I bet (bet is different than assure) if we look at the specifics of the Ritson red proxies RC post, that the differencing was a mistake, at least what inferences were made using that.”

Just so long as we understand that the “differencing” was a deliberate part of Ritson’s method for estimating the right magnitude of the noise component to add to simulation climate series to create realistic pseudoproxies. Asserting that this was “mistake” means asserting that the *method* was mistaken, or gave erroneous results, not that there was a mistake in his calculations. To show this, you have to show that the resultant pseudoproxies were not realistic. I don’t see any evidence of that, whereas von Storch’s original method gave highly unrealistic results.

I see at comments #20 and #22, Mike Mann corrects his misunderstanding of the Ritson technique, although perhaps he fails to be sufficiently grovelling in admitting that he had misunderstood the method in his original comments. Sorry, I don’t see this as a major problem.

http://www.realclimate.org/index.php/archives/2006/05/how-red-are-my-proxies/#comment-13882

McIntyre, on the other hand, said Ritson was “nuts” because he estimated AR1 parameter of 0.15, instead of the actual full AR1 “red noise” parameter of 0.4 present in the proxies. So McIntyre did not understand Ritson’s procedure nor its purpose. And never corrected himself. Maybe he still doesn’t understand it.

And with that we are done. I hope that’s enough for Willard. [Edited for completeness.]

It’s not the grovelling, it’s the clarity. Since he said one thing at one time, he ought to be extremely explicit so people understand that he is correcting a previous error. As he writes now, it is hard to even tell what he means. I find this a trait of his by the way.

On the differencing, yeah, I GET that Ritson was trying to estimate the autocorrelated noise structure of the series as separate from any signal. The question is whether his method is appropriate, how it handles medium level autocorrelation SIGNAL (which we know exists, cf. ENSO), why he didn’t use some conventional method, etc.

Who knows, maybe his method was best. I suspect not…

[DC: OK so we’ve gone from Ritson made a boo-boo, to Mike Mann wasn’t clear enough in admitting a previous mistake (about Ritson’s method). That’s progress I suppose.

I know you get why Ritson used differencing. But what about McIntyre? Either he didn’t get it, or he did and was trying to score rhetorical points by a ridiculous red herring. Not good either way. And I didn’t notice McIntyre pointing to a “conventional method” to estimate the true noise component.

If you read the comments carefully, it’s pretty clear that Ritson’s method gives fairly realistic results, compared to von Storch’s wildly high ad hoc noise estimate. It also seems, reading further, that Mike Mann didn’t feel differencing was necessary, giving a higher estimate of the noise factor. But even so the final results from Mann and Ritson are not that different.

Now this is truly the end. I’m getting tired and I’m sure I’m not the only one. You know I have time for your comments, but it’s also good to know when to stop. Thanks! ]

It seems to me that the Ritson approach, or something like it, is more likely on the correct path than the MM approach (via subterfuge) of incorporating the signal into his purported “red noise” model.

Mann stated that he did not use differencing in his method of estimating the noise component. It seems that he made a mistake in his interpretation of the question, oh, well. It is a blog, not a paper.

Well, that’s part of the reason I didn’t want to go down that road, because the RC Ritson differencing and “red noise” post really concerns the “other” use of pseudo-proxies. Anyway, my take on it after reading all the comments is you have Ritson and Mann both agreeing that for that particular application you need to estimate the noise component, but Mann used a different method (as you say, he didn’t agree with Ritson’s differencing).

[DC: Nope – enough is enough. We’ve both had our say. Someone else’s turn, hopefully more on topic. Thanks!]

DC,

Thank you for the clarification. Sorry to have made TCO do it.

It seems to me that discussing how simulation and hypothesis testing are related to each other would help bind all this stuff together.

At the very least, there should be one and only one place to keep track of everything one needs to know about this stuff, which seems to me at the very core of the theoretical debate that is going on. In all fairness, this criticism also holds for the peerreviewedlitterchur. We should expect the web to solve this.

Blogs are not enough for that. We should build micro-sites. Something like:

http://www.woodfortrees.org

PS: Do you plan to talk about M&M’s reply to Ritson?

Willard,

You asked:

“PS: Do you plan to talk about M&M’s reply to Ritson?”

Do you mean M&M’s unpublished reply to Ritson’s unpublished comment on the GRL comment? Or do you mean McIntyre’s “Ritalin” post?

I was thinking about the paper:

ritson.reply.258826.pdf

This paper is cited by the “take a Ritalin, Dave” post.

willard,

Yep…

Non-stationary processes have deterministic trends or stochastic trends (unit root processes e.g. random walk). AR, ARMA, and ARFIMA models assume (require) that the process being modelled *is stationary*. In standard time series analysis, a deterministically trending series will have the trend subtracted, and a stochastically trending series will be differenced (this is called ARIMA) to make it approximately stationary, before estimating the model order and parameters. Steve shows that applying differencing to a synthetic series which *is* stationary will result in underestimating the AR coefficients (‘over-differencing’). Ritson’s method applies a (somewhat convoluted) differencing, so Steve shows the obvious… it underestimates the AR coefficients of a synthetic purely (stationary) AR process. This is why tests for stationarity are applied to series *before* doing (or not) any differencing or trend subtracting. The question is… are hockey-stick series stationary or not? If not, Ritson’s method may be ok, and is certainly better than simply estimating the AR coefficient from non-stationary series ala Steve. If they are stationary, Ritson is wrong, Steve is right. UC summarized it quite well…

In standard ARMA time series analysis…

1) Determine whether the series is stationary

2) If not, make it stationary

3) Estimate the model order

4) Estimate coefficients

5) Run diagnostics

In the GRL paper, M&M missed out 1, 2, 3, and 5.

Wegman didn’t pick up on this (and ought to have).

‘Coz if the series are non-stationary, at minimum the AR and ARFIMA models used by M&M are mis-specified… and this will result in the autocorrelations of the simulated series being too high… which will affect Monte-Carlo benchmarks used in M&M, and may affect their finding of lack of significance in MBH98.

Phew.
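The over-differencing point in this comment is easy to check numerically. The Python sketch below uses plain first differencing on a synthetic stationary AR1(.4) series. It is not Ritson’s actual procedure or Steve’s test, and the seed, series length and function names are arbitrary choices for the illustration. For a stationary AR(1) with coefficient rho, the lag-1 autocorrelation of the first differences works out to -(1 - rho)/2.

```python
import random


def ar1_noise(n, rho, rng):
    """Return n values of stationary AR(1) noise with unit-variance innovations."""
    x = rng.gauss(0.0, 1.0) / (1.0 - rho * rho) ** 0.5
    out = [x]
    for _ in range(n - 1):
        x = rho * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


def lag1_autocorr(series):
    """Sample lag-1 autocorrelation of a series."""
    n = len(series)
    mean = sum(series) / n
    num = sum((series[i] - mean) * (series[i + 1] - mean) for i in range(n - 1))
    den = sum((v - mean) ** 2 for v in series)
    return num / den


rho = 0.4
x = ar1_noise(5000, rho, random.Random(1))
dx = [x[i + 1] - x[i] for i in range(len(x) - 1)]  # plain first difference

r_orig = lag1_autocorr(x)
r_diff = lag1_autocorr(dx)
print("lag-1 autocorrelation, original   :", round(r_orig, 2))
print("lag-1 autocorrelation, differenced:", round(r_diff, 2))
print("theory for differenced AR(1)      :", -(1 - rho) / 2)
```

So if the series really is stationary, estimating persistence after differencing badly understates it. If the series carries a trend, the undifferenced estimate overstates it instead, which is exactly why step 1 in the list above comes first.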

(If I’m allowed back in from “time out”) EZ pointed out the same thing Lazar is mentioning, back in the discussion in 2006 and pointed out the trickiness of trying to develop a complex noise model from the proxies themselves, given they may (or may not) contain signal. Probably there is some way to look at this in terms of “bounding” the problem (i.e. do it the Ritson way and the Steve way and see what those extrema are).

Also, would think (with my simple brute force peabrain) that analysis of the period of overlap of instrumental temp and of trees would allow some analysis of the noise structure (since here you actually can tell what the signal is, and of course the signal has autocorrelation, we know ENSO for instance).

> that analysis of the period of overlap of insturmental temp and of trees, would allow some analysis of the noise structure

Yep… actually I couldn’t think of any other way. But then you use the same instrumental data to calibrate against. You should be very careful to avoid double counting

Also Steve (way back in some Neverending Audit post, not sure where, really hard to track anything down) wrote up some very simple time series examples showing what an AR1, AR11, trend, white noise only, trend with noise, etc. looked like. So he must at least some time have considered the issue of co-existence of noise and signal. Consequently his evasion on discussing the implications of “full ACF” seems dishonest. If he were really the kind of person who gets jazzed with thinking about things, he would want to dig into it. Even if it “hurts him”. that’s how curious people are. They love the aha. But he seems to only love it when it hits Mann or helps him. I just don’t grok that. 😦

TCO,

… correlating proxy and temperature series and looking at the error terms? or something else?

The former

It might be useful to recap the “red noise” discussion in a way that will hopefully tie back (eventually) to the use of ARFIMA derived pseudoproxies in M&M GRL 2005.

First, we should keep in mind that the discussions referred to (and I’ll list them together in a moment), concerned the correct way to generate synthetic pseudo-proxies, given a specified temperature history, in order to test a paleoclimatology methodology’s ability to recover that signal. To do this, one must model the relationship between signal and noise in real-world proxies. The answer will not necessarily transfer directly to the problem of constructing random pseudo-proxies (as in GRL 2005 and standard paleo benchmarking), but surely has implications for it.

With that in mind here is a thumbnail of the discussion:

In the original RC discussion, David Ritson presents his “differencing” method for estimating the right amount of noise to add to obtain realistic “pseudo-proxies”. The interesting thing here is that Mike Mann ends up referring to an alternative method that does not involve differencing, although the principle (attempted disaggregation of signal and noise) is the same. Mann’s implicit argument with Ritson appears to be that the situation is more complex than low frequency = signal and high frequency = noise (and here I think TCO, Mann and I all have common ground).

The later part of the discussion involves Demetris Koutsoyiannis (I’ll return to that in a moment).

In his first off the cuff CA post, McIntyre declared Ritson “nuts”, because Ritson’s derived “red noise” model does not look like “red noise” found in real proxies. As I’ve argued above, this missed the point spectacularly. Enough said.

In the next relevant post in this particular chain (the one referred to by Lazar), McIntyre cited email correspondence with Koutsoyiannis, who jumps in to congratulate McIntyre and answer questions. But since Koutsoyiannis was also involved in the original discussion at RC, it might be more profitable for folks to look back at that, rather than the fairly one-sided discussion at CA.

But before I do that, I’m going to highlight one important aspect of Lazar’s excellent exposition. At the beginning he stated:

Now it seems to me that at least part of the argument was not purely about stationarity, but was more fundamental. If you look at the original RC post, we have Ritson, Mann and Schmidt all arguing against Koutsoyiannis.

The discussion starts here (with detailed response by Ritson).

Then Mike Mann summarizes the conflict quite succinctly:

Now there are (at least) two important points here:

1) One “side” (Ritson, Mann, Schmidt and paleoclimatologists in general) is trying to model the structure of the non-climate “noise”. The other side, Koutsoyiannis (and by extension McIntyre at CA), is modelling the entire variability of the proxies as a stochastic process. This is a much more fundamental issue than a particular method (e.g. Ritson “differencing”).

2) This discussion happened well over a year after the release of GRL 2005. So whatever the merits of the various positions, and their possible implications for the construction of appropriate random pseudoproxies for benchmarking, these considerations were not discussed in GRL 2005.

As Lazar points out, there was no rationale or justification given for the ARFIMA derived pseudo-proxies in M&M 2005. In fact, as far as I can tell, the methodology was not even clearly identified, let alone described or justified.

DC, I think you (and TCO before you) seem to miss something. The Ritson write-up is here:

nred.pdf

If you read it, you see that he actually computes the autocorrelation of the original (undifferenced) proxies; the differencing is just a “trick” to suppress low frequencies in the time series, assumed to be all signal (= forced climatic variations). Mike Mann and some commenters are talking past each other on the RC thread. Mike was not mistaken and did not correct himself; he just double-checked and then re-stated more clearly what Ritson had done. It would be nice if at least this meme could be buried here and now.


TCO,

You echo vS;

von Storch, H., E. Zorita, and F. González-Rouco (2009), Assessment of three temperature reconstruction methods in the virtual reality of a climate simulation, International Journal of Earth Sciences, 98, 67-82, doi:10.1007/s00531-008-0349-5.

For their pseudo-proxies they used…

a) white noise, the amplitude estimated by correlation as per your example

b) red noise generated by two methods;

i) constant red noise, by fitting an AR(2) model to GCM temperature series, and scale down the variance of the synthetic series

ii) spatially varying red noise, using an AR(1) model with lag-1 correlation drawn from a Beta distribution

c) long-term persistence, using a method I haven’t the foggiest about… using Fourier transforms

So, yes, they follow on from the comments Zorita made in 2006.
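As a rough illustration of option b) ii) only, here is a Python sketch that draws one lag-1 correlation per pseudo-proxy from a Beta distribution and generates AR(1) noise with it. The Beta shape parameters, the number of proxies and the series length are hypothetical values picked for this example. They are not taken from von Storch et al 2009.

```python
import random


def ar1_noise(n, rho, rng):
    """Return n values of stationary AR(1) noise with unit-variance innovations."""
    x = rng.gauss(0.0, 1.0) / (1.0 - rho * rho) ** 0.5
    out = [x]
    for _ in range(n - 1):
        x = rho * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


rng = random.Random(2)
ALPHA, BETA = 2.0, 5.0        # hypothetical Beta shape parameters
N_PROXIES, N_YEARS = 5, 200   # hypothetical network size and length

# One lag-1 correlation per proxy location, then one noise series per location.
rhos = [rng.betavariate(ALPHA, BETA) for _ in range(N_PROXIES)]
noise_series = [ar1_noise(N_YEARS, rho, rng) for rho in rhos]

for i, rho in enumerate(rhos):
    print(f"proxy {i}: lag-1 correlation = {rho:.2f}")
```

In a real pseudo-proxy experiment each noise series would then be scaled and added to the simulated local temperature at that location.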

Interesting stuff!

I was thinking of compiling a list of peer-reviewed articles to drive further discussion (and get away from discussions of blogs).

But von Storch et al 2009 is as good a place as any to start (thanks, Lazar!):

> I was thinking of compiling a list of peer-reviewed articles to drive further discussion (and get away from discussions of blogs).

This could be done in collaboration with the AGW Observer:

http://agwobserver.wordpress.com/

PS: Thanks to you and Lazar for the clarifications! Somehow, this is not unrelated to the famous VS thread… 😉

If any of you can describe simply ARMA, ARIMA, ARFIMA, and their parameters, and when and why they are appropriate, and when to detrend or difference, and how all this helps us use proxies to estimate temperatures for the past 2,000 years, and then demystify the training and verification periods, (so that a congressperson could understand), you will have really accomplished something. Could analogies or simple examples help?

How do you separate signal from noise? And is there a critter called “persistent trendless red noise”?

There are some decent tutorials. I would advise not teaching time series 101, but only highlighting where differences make a difference in the context of what we care about.

Huybers’s comment on MM is a great example of clarifying a contentious issue and making a correction. I remember reading all the McI blathering about different sorts of matrices and all that. When I finally buckled down and read Huybers it became obvious that no linear algebra was needed (the normalization can be understood as a scalar). And that McI had changed “two things at once” and implicated one thing as the cause.

If you can hit the Huybers level of clarity and correctness that would be really something. The problem is not that this stuff is really that “hard” per se, but that there are so many little aspects of what Wegman, Mann and McI did (in different documents, to support different graphs, for RE computation versus for the HS demonstration, etc.). I can foresee him reading this thread now, planning some screed where he can act all pompous and spread some FUD. So…would just urge you to really be careful and staple his wings to the page…

Sure, Huybers identified a major problem in M&M 2005, namely that M&M did not follow the actual MBH methodology (in this case the standardization step). But none of the four initially submitted comments (including Huybers’s as well as Ritson’s rejected comment) appears to have identified the key issue under discussion here – the use of the “full autocorrelation structure” of the proxies to generate the sets of null proxies used by M&M to demonstrate the so-called mining of “hockey sticks” by Mann’s PCA methodology.

This appears to have been a result of M&M’s failure to clearly document their methodology. The problem was compounded by the use of non-standard terminology such as “persistent red noise” to refer to “long memory” statistical models, and “pseudo-proxies” to refer to null random noise proxies.

Above, willard claims to find evidence that “McKitrick’s reaction [to Ritson] seems quite normal and justified”.

I think it’s more likely that Ritson may have expressed frustration with M&M’s failure to clearly document what they had done. If you look at the timeline of the correspondence, it apparently took until November 2005 for M&M to supply to Ritson the actual individual noise model parameters (one for each of the 70 proxy series) that they derived from the actual proxies and used to build their sets of supposedly “random” null proxies. It turned out that M&M had permitted model fits to autocorrelation lags limited only by the length of the proxy series. But you have to examine the code very carefully to see that:

for (k in 1:ncol(tree)) {
  Data[,k] <- acf(tree[,k][!is.na(tree[,k])], N)[[1]][1:N]
} #k

(N being the length of the proxy series, 580 in this case, although strictly speaking acf() will cut it off at N-1, since that is the longest possible lag).

Then hosking.sim is used to generate a "pseudoproxy" based on the 579 lag coefficients returned for each of the 70 real proxies, with a complete set of 70 generated 10,000 times.

for (k in 1:n) {
  b[,k] <- hosking.sim(N, Data[,k])
} #k
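The lag cut-off mentioned above is easy to check with base R. The sketch below uses a made-up AR(1) series of length 580 as a stand-in for a real proxy (the coefficient 0.4 is an assumption) and only demonstrates how acf() behaves when asked for a lag as long as the series; it does not call hosking.sim.

```r
# Stand-in series: the real proxies are not used here (assumed AR(1), phi = 0.4)
set.seed(3)
N <- 580
x <- as.numeric(arima.sim(model = list(ar = 0.4), n = N))

# Ask for lag.max = N; acf() silently caps it at N - 1, so the result
# holds N values: lag 0 followed by lags 1 .. N-1 (i.e. 579 non-zero lags)
full_acf <- as.numeric(acf(x, lag.max = N, plot = FALSE)$acf)
print(length(full_acf))

# Asking for even more lags changes nothing; the cap is the series length minus one
capped <- as.numeric(acf(x, lag.max = 10 * N, plot = FALSE)$acf)
print(length(capped))

# By contrast, an AR(1) description of the same series needs a single number
phi_hat <- full_acf[2]
print(phi_hat)
```

So a noise model built on the full output carries hundreds of fitted values per series, where a simple AR(1) model carries one.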

Of course, I've already shown that Wegman clearly didn't understand what M&M had done. As far as I know the first (and only) paper to identify this issue was Ammann and Wahl 2007 (recall that I showed this quote in my McShane and Wyner piece):

Click to access fulltext.pdf

Deepie:

1. It’s good to see you rake this stuff up again and especially wrt Wegman. I look forward to a clear exposition. Please be both clear and correct (I just don’t want the worm-twisting if you are not precise.) And yeah, I guess it did not make the formal comments enough (and W&A failed to really show in a “Huybers” manner exactly the issue involved with the full acfing). I think Ritson was already WELL on this, based on his comments and stuff, but just lost heart to push it home. I was asking the right questions as well, and have made snide comments about the “signal inside the red” issue for years now, but just got sick of the equivocation. It honestly makes my muscles tense just to deal with that sort of stuff. Especially from my side, which I want to be brave and honorable. That sort of stuff just makes me want to walk away from the climatosphere for a year or so.

2. I don’t think of it as a “true” or “single” fault. There are various choices and really various errors. This is part of the conflation and confusion fallacy from both sides. It is important to disaggregate issues and realize that you can have one thing wrong and another thing “not wrong”, rather than all or nothing.

3. Also, at least wrt Huybers, the issue was not so much the “failure to follow MBH”, but the failure to be clear about how he had deviated (i.e. changing two things at once). Here doing the full factorial would be the way to clearly show the dependence of the answer on the choices involved. BOTH “short-centering” versus “normal centering” AND standard deviation dividing OR NOT, have impacts. It should be the easiest thing to just show the range of choices in a two-by-two full factorial (11, 10, 01, and 00).

What McI does is a rhetorical device, by conflating the two issues. Off-centering is pretty clearly “wrong” and has some effect on the PC1 regardless of standard deviation dividing (but by changing two things at once, McI exaggerated impact).

The standard deviation dividing is more debatable, although I’m inclined to think including it is better (as Mann did). But if it’s debatable, one can still estimate the impact and show dependence of the solution on the choice. That’s how a real thinking, objective analyst would discuss it.

Of note, McKitrick actually ARGUES that standard deviation dividing is bad, while McI tries to play “fox” by saying he has no “take” on whether it is right or wrong (but then why did he make the choice, why did he not show the numeric effect of each choice…rather than his CYA word games in articles?)

4. There are some other areas where McI resisted showing/sharing his methodology (I think he did eventually after months of nagging): his “recomputed RE measurements” for the reply to Huybers. Also his noise structure for benchmarking (this may be the same thing, can’t recall exactly). I do know I was after him for the code on the first and Burger (who is a skeptic! but got disgusted with Steve’s obfuscation) was on him for the 20 series mixed with white noise. At a minimum, these areas show evasiveness. At a maximum, there are some other flaws mixed in there. I just don’t/didn’t have the heart to keep after this stuff, especially since the guy is just a frigging blogger and since his lack of candor (and on my side) just physically disgusts me.
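The two-by-two factorial suggested in point 3 could be laid out along these lines in R. This is a toy sketch on synthetic AR(1) noise, not a reproduction of anyone's analysis: the number of series, the AR coefficient, the 79-step "calibration" window and the helper `pc1_share` are all assumptions made for illustration, and the numbers it prints say nothing about the real proxy network.

```r
# Toy 2x2 factorial: centering choice x standard-deviation scaling choice.
set.seed(11)
n_years  <- 580                            # series length (assumed)
n_series <- 50                             # number of synthetic series (assumed)
calib    <- (n_years - 78):n_years         # last 79 steps as "calibration" window (assumed)

X <- sapply(rep(0.3, n_series),
            function(p) as.numeric(arima.sim(model = list(ar = p), n = n_years)))

# Hypothetical helper: share of total sum of squares captured by PC1
# under one combination of centering and scaling choices.
pc1_share <- function(X, short_center, scale_sd) {
  rows <- if (short_center) calib else seq_len(nrow(X))
  mu <- colMeans(X[rows, , drop = FALSE])  # mean over the chosen window
  Z  <- sweep(X, 2, mu, "-")
  if (scale_sd) {
    s <- apply(X[rows, , drop = FALSE], 2, sd)
    Z <- sweep(Z, 2, s, "/")               # divide by sd over the same window
  }
  d <- svd(Z)$d
  d[1]^2 / sum(d^2)
}

# All four combinations (the 11, 10, 01 and 00 cells)
design <- expand.grid(short_center = c(TRUE, FALSE), scale_sd = c(TRUE, FALSE))
design$pc1_share <- mapply(function(sc, ss) pc1_share(X, sc, ss),
                           design$short_center, design$scale_sd)
print(design)
```

Reporting all four cells side by side is what lets a reader see the effect of each choice separately, instead of two changes bundled into one comparison.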

TCO — I don’t get it. What ‘side’ are you on? Why have you picked a ‘side’? It seems you just hope that folks convincingly get to the bottom of the whole issue and publish a comprehensive explanation. Is there a ‘side’ associated with that?

As far as I can make out, TCO is a contrarian, with some definite interest in facts and reality, but liable to be swayed by the outside battling the cabal, or however you want to put it. Hence their great interest in McI et al. At best they can be a useful gadfly, at worst they can be a concern troll and help waste time.

He has libertarian leanings, and fights reality because he’s smart enough to understand that if climate science is correct, some sort of collective action is necessary to avoid disaster.

He’s honest about it.

Ya gotta be on a side, man. 😉

http://webcache.googleusercontent.com/search?q=cache:TiwwV2cCy3QJ:https://probabilitynotes.wordpress.com/2010/08/22/global-temperature-proxy-reconstructions-bayesian-extrapolation-of-warming-w-rjags/+%22which+side+are+you+on%22+polyistcoandbanned&cd=1&hl=en&ct=clnk&gl=us

Steve L, I’ve been asking that very same question of TCO off and on, without ever getting a very clear answer. I take it that it’s historical — he has a personal history of hanging out at McI and similar blogs, and identifying with them before getting incurably fed up. I suppose it’s over now — one may live in hope 😉

BTW TCO identifies one powerful reason why these things that DC (and John Mashey) have now uncovered, took so long to uncover:

“It honestly makes my muscles tense just to deal with that sort of stuff”

“…just physically disgusts me”

Yep. Same here. And I just cannot find the patience for something that isn’t science and won’t produce any new knowledge; that’s for lawyers, not scientists. DC and JM found the patience and the lawyerly attitude and set their disgust aside — and I was wrong: what they found is extremely interesting. In a sick sort of way.

This can get a little long:

1. Papers by Michael Mann et al. in 1998 and 1999 gave a picture, including a sudden warming in recent times, that’s been upheld by a variety of data analyses.

2. Critiques of those papers that claim a bad analysis managed to get an approximation of reality are flawed because they either attempt to discredit methods of analysis not actually used in Mann et al., or incompetently include information in their allegedly random counterexamples, or refuse to address the science, data, models or analysis and attack the authors or their institutions.

These 2 sentences account for about 90% of the hockey stick controversy over the past 12 years.


DC, a big thank-you to you for your perseverance on this. JM was expressing his gratitude over at Deltoid that without your efforts none of this would have come to light. I for one am most grateful that there’s a good chance that some of those who set out to do the dirty work of the denial machine will be held to account for their sins. A side benefit is that I hope that others may think twice before getting entangled in such a dishonest enterprise and wait until the plot is underway and then blow the whistle.

Wegman et al. have been hoist by their own petard. I believe that many will attribute this to many things including a lack of integrity. I hope that the ultimate damage radius envelops every one of those actively involved in what most reasonable people would believe was a conspiracy.
